- Blog

- Morph mod how to change into morphs

- Excel templates for mac

- Jason bourne movies streaming

- Download zoom cloud meeting for pc free

- Browser with flash player installed for power pc

- Install adobe flash player google chrome windows 10

- Default path on mac mini web server

- Kerbal space program free download 2018

- Download amd graphics driver for windows 7 64 bit

- Cooties 2014 full movie watch online

- Best ram memory for macbook pro 2009

- About jw player 5-8-2011

- Skyrim hdt physics extension

- 2019 best torrent software

- How to save file as python on sublime for mac

- Microsoft display dock 800x600

- Schwinn id numbers

- How to clean chrome with avast browser cleanup

- How to use nessus ubuntu free

- Bitdefender free download for windows 7

- What is the most popular video editing software for youtube

- Help with lightroom 6 download

- Wordpress theme 2017 header video

- Open source speech to text software

- Pixlr editor online web app

- Master video editor free download

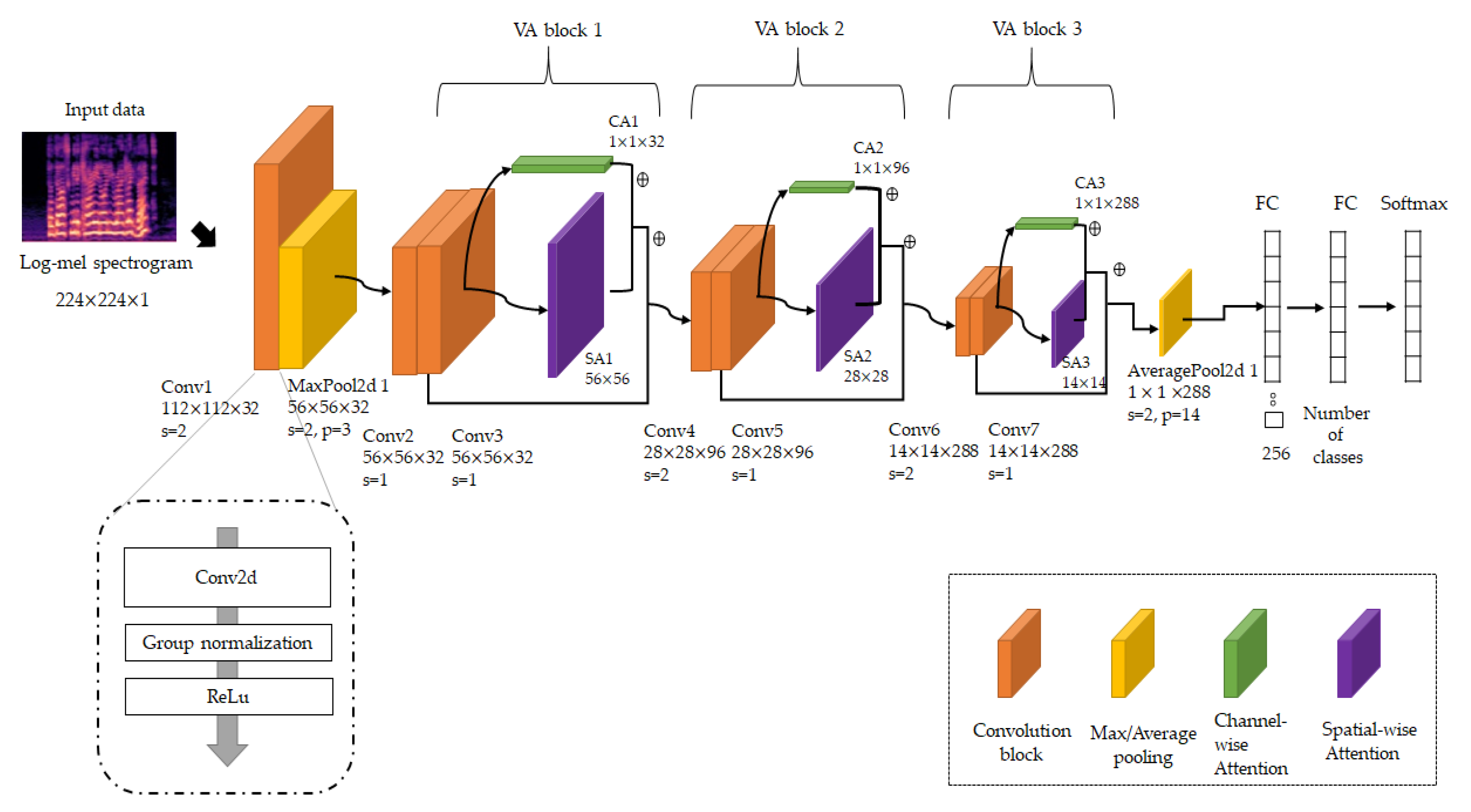

Source: YouTube, First Computer to Sing “Daisy Bell”

I came across a few open-source Text To Speech (TTS) frameworks. After a fair amount of research and experimentation, only one proved fully open-source, extensible, and easy to integrate into applications.

Using computers to synthesize speech isn’t new. The first successful use case of Text To Speech synthesis occurred on a hulking, room-sized IBM computer at Bell Labs in 1961, when researchers recreated the song “Daisy Bell,” but audio quality remained an issue for decades. It wasn’t until the modern revolution in Machine Learning and advances in Deep Neural Networks (DNNs) that this domain was transformed and algorithms could produce human-sounding synthetic speech. A host of new audio use cases is now possible and scalable.

Training your own custom voices for your audio use cases is extremely GPU intensive due to the DNN requirements, taking days or even weeks. This is currently one barrier to mass adoption and integration by the community. As we’ll explore in this demo, however, with a pre-trained voice you can get results fast!

Upon initial research, you will first come across the widely popular SV2TTS from Corentin Jemine, and it’s interesting for a glimpse into what’s possible. It isn’t fully customizable, and if your use case is voice cloning, you will get subpar results. It is, though, a great example of an open-source tool whose backbone was commercialized into resemble.ai’s main product.

The mainstream two-stage method of TTS follows a structure similar to a Convolutional Neural Network (CNN) model: in short, a “Mel-spectrogram” (an image of audio) can be labeled with text, which allows for classification-style tasks on audio. We are essentially using the same deep learning techniques that modern-day CNNs use to classify cats vs. dogs, now applied to audio images, aka Mel-spectrograms, to classify sound.
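To make the “image of audio” idea concrete, here is a minimal sketch of how a Mel-spectrogram can be computed. It is an illustration only, not the pipeline used by any of the frameworks mentioned in this post: the function names, the toy 1 kHz tone, and the tiny parameter values (`n_fft=64`, `hop=32`, `n_mels=8`) are all assumptions chosen so the example runs with the Python standard library alone. In practice you would likely reach for an audio library instead of a hand-written DFT.

```python
import cmath
import math

def hz_to_mel(hz):
    # Common (HTK-style) mel-scale formula
    return 2595.0 * math.log10(1.0 + hz / 700.0)

def mel_to_hz(mel):
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)

def power_spectrum(frame):
    """Naive DFT power spectrum of one Hann-windowed frame (bins 0..N/2)."""
    n = len(frame)
    windowed = [x * (0.5 - 0.5 * math.cos(2 * math.pi * i / n))
                for i, x in enumerate(frame)]
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(windowed))
        spectrum.append(abs(acc) ** 2)
    return spectrum

def mel_filterbank(n_mels, n_fft, sample_rate):
    """Triangular filters whose centers are evenly spaced on the mel scale."""
    mel_max = hz_to_mel(sample_rate / 2)
    # n_mels + 2 edge points: each filter spans three consecutive points
    edges_hz = [mel_to_hz(mel_max * i / (n_mels + 1)) for i in range(n_mels + 2)]
    bin_hz = [k * sample_rate / n_fft for k in range(n_fft // 2 + 1)]
    bank = []
    for m in range(n_mels):
        lo, mid, hi = edges_hz[m], edges_hz[m + 1], edges_hz[m + 2]
        bank.append([max(0.0, min((f - lo) / (mid - lo), (hi - f) / (hi - mid)))
                     for f in bin_hz])
    return bank

def mel_spectrogram(signal, sample_rate, n_fft=64, hop=32, n_mels=8):
    """Return log mel energies as a list of frames, each with n_mels values."""
    bank = mel_filterbank(n_mels, n_fft, sample_rate)
    frames = []
    for start in range(0, len(signal) - n_fft + 1, hop):
        power = power_spectrum(signal[start:start + n_fft])
        energies = [sum(w * p for w, p in zip(row, power)) for row in bank]
        # Log compression, with a small floor to avoid log(0)
        frames.append([math.log(e + 1e-10) for e in energies])
    return frames

# Toy input (an assumption for illustration): a 1 kHz sine sampled at 8 kHz
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(512)]
spec = mel_spectrogram(tone, sample_rate)
print(len(spec), "frames x", len(spec[0]), "mel bands")
```

The result is a 2-D grid (time frames by mel bands), which is why image-style models such as CNNs can be applied to it. Real TTS systems typically use far larger settings, for example dozens of mel bands and FFT sizes in the hundreds or thousands of samples.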
